Technology

Even the “Godmother of AI” has no idea what AGI is

Are you confused about artificial general intelligence, or AGI? It’s the thing OpenAI is obsessed with ultimately creating in a way that “benefits all of humanity.” You may want to take them seriously, because they just raised $6.6 billion to get closer to that goal.

But if you’re still wondering what the heck AGI is, you are not alone.

During a wide-ranging discussion at the Credo AI Leadership Summit on Thursday, Fei-Fei Li, a world-renowned researcher often called the “godmother of artificial intelligence,” said she, too, doesn’t know what AGI is. At other points, Li discussed her role in the birth of modern artificial intelligence, how society should protect itself from advanced artificial intelligence models, and why she thinks her new unicorn startup, World Labs, will change everything.

But when asked what she thought about an “AI singularity,” Li was as confused as the rest of us.

“I come from an academic artificial intelligence background and was educated in more rigorous and evidence-based methods, so I don’t really know what all these words mean,” Li told a packed room in San Francisco, next to a big window overlooking the Golden Gate Bridge. “Honestly, I do not even know what AGI stands for. Like people say you recognize it when you see it, I do not think I’ve seen it. The truth is that I do not spend much time thinking about these words because I believe there are far more important things to do…”

If anyone would know what AGI is, it’s probably Fei-Fei Li. In 2006, she created ImageNet, the world’s first large AI training and benchmarking dataset, which has been instrumental in catalyzing the current AI boom. From 2017 to 2018, she served as Chief Scientist of AI/ML at Google Cloud. Today, Li directs Stanford’s Human-Centered AI Institute (HAI), and her startup World Labs builds “large world models.” (This term is almost as confusing as AGI, if you ask me.)

OpenAI CEO Sam Altman attempted to define AGI in a profile in The New Yorker last year. Altman described AGI as “the equivalent of the average person you might hire as a co-worker.”

Meanwhile, OpenAI’s charter defines AGI as “highly autonomous systems that outperform humans at most economically valuable work.”

Apparently, these definitions weren’t good enough for a $157 billion company to work toward. So OpenAI created five levels that it uses internally to measure its progress toward AGI. The first level is chatbots (like ChatGPT), then reasoners (apparently, OpenAI o1 was this level), agents (which are supposedly coming next), innovators (AI that can help invent things), and the last level, organizations (AI that can do the work of an entire organization).

Still confused? So am I, and so is Li. Plus, this all sounds like a lot more than an average co-worker could do.

Earlier in the conversation, Li said she was fascinated by the idea of intelligence from a young age. This led her to study artificial intelligence long before it became profitable. Li says she and a few others were quietly laying the foundations for the field in the early 2000s.

“In 2012, my ImageNet combined with AlexNet and GPUs; many people call that the birth of modern AI. It was driven by three key ingredients: big data, neural networks, and modern GPU computing. I believe that once that moment arrived, life in the entire field of artificial intelligence, and in our world, was never the same again,” Li said.

When asked about California’s controversial artificial intelligence bill SB 1047, Li spoke carefully so as not to rehash the controversy that Governor Newsom had just put to rest by vetoing the bill last week. (We recently spoke with the author of SB 1047, and he was more keen to reopen his argument with Li.)

“Some of you may know that I have been very vocal about my concerns about this bill (SB 1047), which was vetoed, but at this moment I am thinking deeply, and with great excitement, about looking to the future,” Li said. “I was very honored, indeed honored, that Governor Newsom invited me to participate in the next steps after SB 1047.”

California’s governor recently tapped Li and other AI experts to form a task force to help the state develop guardrails for AI deployment. Li said she takes an evidence-based approach in this role and will do her best to advocate for academic research and funding. But she also wants to make sure that California won’t punish technologists.

“We need to really look at the potential impact on people and our communities, rather than putting the blame on the technology itself… It would not make sense for us to penalize a car engineer, say at Ford or GM, if a car is misused, intentionally or unintentionally, and harms a person. Just penalizing the car engineer won’t make cars safer. We must continue to innovate for safer measures, but also improve the regulatory framework, whether it’s seat belts or speed limits, and the same goes for artificial intelligence.”

This is one of the better arguments I’ve heard against SB 1047, which would have penalized tech companies for dangerous artificial intelligence models.

While Li advises California on artificial intelligence regulation, she also runs her startup, World Labs, in San Francisco. This is Li’s first time founding a startup, and she is one of the few women running a cutting-edge artificial intelligence lab.

“We are far from a very diverse artificial intelligence ecosystem,” Li said. “I believe that diverse human intelligence will lead to diverse artificial intelligence and simply give us better technology.”

She is excited to bring “spatial intelligence” closer to reality in the next few years. Li argues that the development of human language, on which today’s large language models are based, probably took a million years, while vision and perception probably took 540 million years. This means that creating large world models is a far more complex task.

“It’s not just about enabling computers to see, but actually understanding the entire 3D world, which I call spatial intelligence,” Li said. “We don’t see just to name things… We really see in order to do things, to move through the world, to interact with each other, and closing the gap between seeing and doing requires spatial knowledge. As a technologist, I’m very excited about this.”

Technology

The latest Google Gemma AI model can run on phones

Google’s family of “open” AI models, Gemma, is growing.

During Google I/O 2025 on Tuesday, Google took the wraps off Gemma 3n, a model designed to run “smoothly” on phones, laptops, and tablets. Available in preview starting Tuesday, Gemma 3n can handle audio, text, images, and videos, according to Google.

Models efficient enough to run offline, without the need for cloud computing, have gained popularity in the AI community in recent years. They are not only cheaper to use than large models, but they also preserve privacy by eliminating the need to send data to a remote data center.

During a keynote at I/O, Gemma Product Manager Gus Martins said that Gemma 3n can run on devices with less than 2 GB of RAM. “Gemma 3n shares the same architecture as Gemini Nano, and is also designed for incredible performance,” he added.

In addition to Gemma 3n, Google is releasing MedGemma through its Health AI Developer Foundations program. According to Google, MedGemma is its most capable open model for analyzing health-related text and images.

“MedGemma (is) our (…) collection of open models for understanding multimodal (health) text and images,” said Martins. “MedGemma works great across a range of image and text applications, so that developers (…) can adapt the models for their own health applications.”

Also on the horizon is SignGemma, an open model for translating sign language into spoken-language text. Google says that SignGemma will enable developers to create new applications and integrations for deaf and hard-of-hearing users.

“SignGemma is a new family of models trained to translate sign language into spoken-language text, but it works best with American Sign Language and English,” said Martins. “This is the most capable sign language understanding model ever, and we are looking forward to you, developers and the deaf and hard-of-hearing communities, taking this foundation and building with it.”

It is worth noting that Gemma has been criticized for its custom, non-standard licensing terms, which some developers say have made adopting the models a risky proposition. However, that hasn’t discouraged developers from downloading Gemma models tens of millions of times.

Technology

Trump to sign bill criminalizing revenge porn and explicit deepfakes

President Donald Trump is expected to sign the Take It Down Act, a bipartisan law that introduces stricter penalties for distributing explicit images, including deepfakes and revenge porn.

The Act criminalizes the publication of such images, regardless of whether they are authentic or AI-generated. Anyone who publishes such photos or videos can face penalties, including a fine, imprisonment, and restitution.

Under the new law, media companies and online platforms must remove such material within 48 hours of notice from the victim. Platforms must also take steps to remove duplicate content.

Many states have already banned sexually explicit deepfakes and revenge porn, but this is the first time federal regulators will step in to impose restrictions on internet companies.

First lady Melania Trump lobbied for the law, which was sponsored by Senators Ted Cruz (R-Texas) and Amy Klobuchar (D-Minn.). Cruz said he was inspired to act after hearing that Snapchat refused for nearly a year to remove a deepfake of a 14-year-old girl.

Free speech advocates and digital rights groups have raised concerns, saying that the law is too broad and could lead to the censorship of legitimate images, such as legal pornography, as well as of government critics.

Technology

Microsoft’s Satya Nadella is choosing chatbots over podcasts

While Microsoft CEO Satya Nadella says that he likes podcasts, he may not actually be listening to them anymore.

That tidbit comes at the end of a longer Bloomberg profile of Nadella, focusing on Microsoft’s artificial intelligence strategy and its complicated relationship with OpenAI. To illustrate how much he uses the Copilot AI assistant in his daily life, Nadella said that instead of listening to podcasts, he now sends transcripts to Copilot and then talks to Copilot about the content while driving to the office.

In addition, Nadella, who jokingly described his job as being an “email typist,” said that he relies on at least 10 custom agents developed in Copilot Studio to summarize emails and messages, prepare for meetings, and perform other tasks in the office.

It seems that AI is already transforming Microsoft in more significant ways, with programmers reportedly hit hardest in the company’s latest layoffs, shortly after Nadella stated that 30% of the company’s code was written by AI.

-

Press Release1 year ago

Press Release1 year agoU.S.-Africa Chamber of Commerce Appoints Robert Alexander of 360WiseMedia as Board Director

-

Press Release1 year ago

Press Release1 year agoCEO of 360WiSE Launches Mentorship Program in Overtown Miami FL

-

Business and Finance12 months ago

Business and Finance12 months agoThe Importance of Owning Your Distribution Media Platform

-

Business and Finance1 year ago

Business and Finance1 year ago360Wise Media and McDonald’s NY Tri-State Owner Operators Celebrate Success of “Faces of Black History” Campaign with Over 2 Million Event Visits

-

Ben Crump1 year ago

Ben Crump1 year agoAnother lawsuit accuses Google of bias against Black minority employees

-

Theater1 year ago

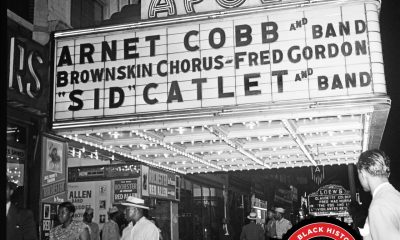

Theater1 year agoTelling the story of the Apollo Theater

-

Ben Crump1 year ago

Ben Crump1 year agoHenrietta Lacks’ family members reach an agreement after her cells undergo advanced medical tests

-

Ben Crump1 year ago

Ben Crump1 year agoThe families of George Floyd and Daunte Wright hold an emotional press conference in Minneapolis

-

Theater1 year ago

Theater1 year agoApplications open for the 2020-2021 Soul Producing National Black Theater residency – Black Theater Matters

-

Theater12 months ago

Theater12 months agoCultural icon Apollo Theater sets new goals on the occasion of its 85th anniversary