Technology

Viggle Creates Controllable AI Characters for Memes and Idea Visualization

You might not be aware of Viggle AI, but you've probably seen the viral memes it created. The Canadian AI startup is responsible for dozens of remix videos of rapper Lil Yachty bouncing around on stage at a summer music festival. In one of the videos, Lil Yachty was replaced by Joaquin Phoenix's Joker. In another, Jesus appeared to be hyping up the crowd. Users have created countless versions of this video, but one AI startup fueled the memes. And Viggle's CEO says YouTube videos are fueling his AI models.

Viggle trained a 3D video foundation model, JST-1, to achieve a “true understanding of physics,” the company says in its press release. Viggle CEO Hang Chu says a key difference between Viggle and other AI video models is that Viggle lets users specify the movement they want the characters to make. Other AI video models often create unrealistic character movements that don't obey the laws of physics, but Chu says Viggle's models are different.

“We’re basically building a new type of graphics engine, but only using neural networks,” Chu said in an interview. “The model itself is completely different from existing video generators, which are mostly pixel-based and don’t really understand the structure and properties of physics. Our model is designed to have that understanding, and so it’s much better in terms of controllability and generation efficiency.”

For example, to create a video of the Joker as Lil Yachty, simply upload the original video (Lil Yachty dancing on stage) and an image of the character (the Joker) who should perform the moves. Alternatively, users can upload images of the character along with text prompts describing how to animate them. As a third option, Viggle lets users create animated characters from scratch using just text prompts.

But memes only make up a small percentage of Viggle's users; Chu says the model has gained widespread adoption as a visualization tool for creators. The videos are far from perfect (they're shaky, and the faces are expressionless), but Chu says the tool has proven effective for filmmakers, animators, and video game designers who want to turn their ideas into something visual. Right now, Viggle's models only create characters, but Chu hopes they'll eventually enable more complex videos.

Viggle currently offers a free, limited version of its AI model on Discord and its web app. The company also offers a $9.99 subscription for more storage and provides some creators with special access through its Creator Program. The CEO says Viggle is in talks with film and video game studios about licensing the technology, but he also sees it being adopted by independent animators and content creators.

On Monday, Viggle announced that it has raised $19 million in a Series A round led by Andreessen Horowitz, with participation from Two Small Fish. The startup says the round will help Viggle scale, speed up product development, and expand its team. Viggle tells TechCrunch that it has partnered with Google Cloud, among other cloud providers, to train and run its AI models. These partnerships with Google Cloud often include access to GPU and TPU clusters, but typically don't include YouTube videos on which to train the AI models.

Training data

During a TechCrunch interview with Chu, we asked what data Viggle’s AI video models were trained on.

“Until now, we have relied on publicly available data,” Chu said, echoing a similar line that OpenAI CTO Mira Murati gave in response to a question about Sora's training data.

When asked if Viggle’s training dataset included YouTube videos, Chu replied bluntly, “Yes.”

That might be a problem. In April, YouTube CEO Neal Mohan told Bloomberg that using YouTube videos to train an AI text-to-video generator would be an “obvious violation” of the platform's terms of use. The comments focused on OpenAI's potential use of YouTube videos to train Sora.

Mohan explained that Google, which owns YouTube, may have agreements with some creators to use their videos in training datasets for Google DeepMind's Gemini. However, according to Mohan and YouTube's Terms of Service, collecting videos from the platform is not permitted without obtaining prior consent from the company.

Following TechCrunch’s interview with Viggle’s CEO, a Viggle spokesperson emailed to retract Chu’s statement, telling TechCrunch that the CEO “spoke too soon about whether Viggle uses YouTube data for training. In fact, Hang/Viggle is not in a position to share the details of its training data.”

However, we noted that Chu had already made the statement on the record and asked for a clear statement on the matter. In response, a Viggle spokesperson confirmed that the AI startup trains on YouTube videos:

Viggle uses various public sources, including YouTube, to generate AI content. Our training data has been carefully curated and refined, ensuring compliance with all terms of service throughout the process. We prioritize maintaining strong relationships with platforms like YouTube and are committed to adhering to their terms, avoiding mass downloads and any other activities that would involve unauthorized video downloads.

This approach to compliance seems to contradict Mohan's comments in April that YouTube's video corpus is not a public source. We reached out to YouTube and Google spokespeople but have yet to hear back.

The startup joins others in the gray area of using YouTube as training data. It has been reported that multiple AI model developers, including OpenAI, Nvidia, Apple and Anthropic, all use YouTube video transcripts or clips for training purposes. It's a dirty secret in Silicon Valley that is not so secret: Everyone probably does it. What's really rare is when people say it out loud.

Technology

Trump to sign bill criminalizing revenge porn and explicit deepfakes

President Donald Trump is expected to sign the Take It Down Act, a bipartisan bill that introduces stricter penalties for distributing explicit images, including deepfakes and revenge porn.

The Act criminalizes the publication of such images, regardless of whether they are authentic or AI-generated. Anyone who publishes the photos or videos can face penalties, including a fine, imprisonment, and restitution.

Under the new law, media companies and web platforms must remove such material within 48 hours of notice from the victim. Platforms must also take steps to remove duplicate content.

Many states have already banned sexually explicit deepfakes and revenge porn, but this is the first time federal regulators will step in to impose restrictions on web companies.

First lady Melania Trump lobbied for the bill, which was sponsored by Senators Ted Cruz (R-Texas) and Amy Klobuchar (D-Minn.). Cruz said he was inspired to act after hearing that Snapchat had refused for nearly a year to remove a deepfake of a 14-year-old girl.

Free speech advocates and digital rights groups have raised concerns, saying that the law is too broad and could lead to censorship of legitimate images, such as legal pornography, as well as of government critics.


Technology

Microsoft's Satya Nadella is choosing chatbots over podcasts

While Microsoft CEO Satya Nadella says that he likes podcasts, perhaps he doesn't listen to them anymore.

That tidbit comes at the end of a longer Bloomberg profile of Nadella, which focuses on Microsoft's artificial intelligence strategy and its complicated relationship with OpenAI. To illustrate how much he uses the Copilot AI assistant in his daily life, Nadella said that instead of listening to podcasts, he now sends transcripts to Copilot and then talks with Copilot about the content while driving to the office.

In addition, Nadella, who jokingly described his job as that of an “email driver,” said that he uses at least 10 custom agents developed in Copilot Studio to summarize emails and news, prepare for meetings, and perform other tasks in the office.

It seems that AI is already transforming Microsoft in more significant ways, with programmers reportedly hit hardest in the company's latest layoffs, which came shortly after Nadella stated that 30% of the company's code was written by AI.


Technology

OpenAI's planned data center in Abu Dhabi would be bigger than Monaco

OpenAI is set to help develop a staggering 5-gigawatt data center campus in Abu Dhabi, positioning the company as the primary anchor tenant in what could become one of the largest AI infrastructure projects in the world, according to a new Bloomberg report.

The facility would reportedly span an astonishing 10 square miles and consume power equivalent to the output of five nuclear reactors, dwarfing any existing AI infrastructure announced by OpenAI or its competitors. (OpenAI has not yet responded to TechCrunch's request for comment, but for perspective, that would be larger than Monaco.)

The UAE project, developed in partnership with G42, a conglomerate headquartered in Abu Dhabi, is part of OpenAI's ambitious Stargate project, a joint venture announced in January that could see massive data centers built around the globe to power AI development.

While the first Stargate campus in the United States, already underway in Abilene, Texas, is set to reach 1.2 gigawatts, this Middle Eastern counterpart would be more than four times that size.

The project emerges amid broader AI ties between the U.S. and the UAE that have been years in the making and that have rankled some lawmakers.

OpenAI's ties to the UAE date to a 2023 partnership with G42 aimed at driving AI adoption in the Middle East. During a talk earlier in Abu Dhabi, OpenAI CEO Sam Altman himself praised the UAE, saying it “has been talking about AI since before it was cool.”

As with much of the AI world, these relationships are ... complicated. Established in 2018, G42 is chaired by Sheikh Tahnoon bin Zayed Al Nahyan, the UAE's national security adviser and the younger brother of the country's ruler. Its embrace by OpenAI raised concerns in late 2023 among U.S. officials, who feared that G42 could enable the Chinese government to access advanced American technology.

These fears focused on G42's “active relationships” with blacklisted entities, including Huawei and Beijing Genomics Institute, as well as entities tied to people linked to Chinese intelligence efforts.

After pressure from U.S. lawmakers, G42's CEO told Bloomberg in early 2024 that the company had changed its strategy, saying: “All our Chinese investments that were previously made have already been divested. For this reason, of course, we no longer need any physical presence in China.”

Shortly afterward, Microsoft, a major shareholder in OpenAI with its own broader interests in the region, announced a $1.5 billion investment in G42, and its president, Brad Smith, joined G42's board.


-

Press Release1 year ago

Press Release1 year agoU.S.-Africa Chamber of Commerce Appoints Robert Alexander of 360WiseMedia as Board Director

-

Press Release1 year ago

Press Release1 year agoCEO of 360WiSE Launches Mentorship Program in Overtown Miami FL

-

Business and Finance12 months ago

Business and Finance12 months agoThe Importance of Owning Your Distribution Media Platform

-

Business and Finance1 year ago

Business and Finance1 year ago360Wise Media and McDonald’s NY Tri-State Owner Operators Celebrate Success of “Faces of Black History” Campaign with Over 2 Million Event Visits

-

Ben Crump1 year ago

Ben Crump1 year agoAnother lawsuit accuses Google of bias against Black minority employees

-

Theater1 year ago

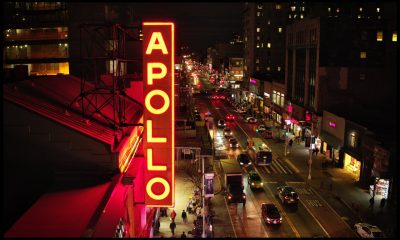

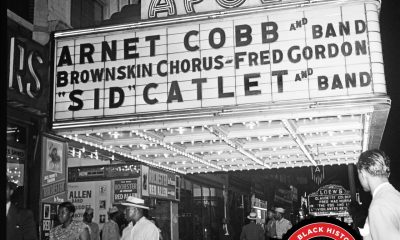

Theater1 year agoTelling the story of the Apollo Theater

-

Ben Crump1 year ago

Ben Crump1 year agoHenrietta Lacks’ family members reach an agreement after her cells undergo advanced medical tests

-

Ben Crump1 year ago

Ben Crump1 year agoThe families of George Floyd and Daunte Wright hold an emotional press conference in Minneapolis

-

Theater1 year ago

Theater1 year agoApplications open for the 2020-2021 Soul Producing National Black Theater residency – Black Theater Matters

-

Theater12 months ago

Theater12 months agoCultural icon Apollo Theater sets new goals on the occasion of its 85th anniversary