Technology

The US government says a vulnerability in the Chirp Systems app allows anyone to remotely control smart home locks

A flaw in a smart access control system used in hundreds of U.S. rental homes allows anyone to remotely control any lock in an affected home. However, Chirp Systems, which makes the system, has ignored requests to fix the flaw.

The US cybersecurity agency CISA published a security advisory last week saying that Chirp-developed phone apps, which residents use in place of a key to access their homes, “improperly store” hard-coded credentials that can be used to remotely control any Chirp-compatible smart lock.

Applications that use passwords stored in the source code, called hardcoded credentials, pose a security risk because anyone can extract these credentials and use them to perform actions that impersonate the application. In this case, the credentials allowed anyone to remotely lock or unlock a door lock connected to Chirp over the Internet.
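To illustrate why such credentials are easy to recover, here is a minimal sketch in Python. It is not Chirp's code: the source snippet, the variable names, and the password value are all hypothetical, invented purely for illustration. It shows that a secret embedded in an app's source can be pulled out with a simple text search by anyone who holds a copy of the app.

```python
import re

# Hypothetical app source (an assumption for illustration, not Chirp's code).
# The secret ships inside every copy of the app that users download.
APP_SOURCE = '''
API_USER = "lock-service"
API_PASSWORD = "s3cr3t-example"  # hard-coded credential (illustrative value)
'''


def extract_string_literals(source: str) -> list[str]:
    """Return every double-quoted string literal found in the given text."""
    return re.findall(r'"([^"]*)"', source)


if __name__ == "__main__":
    # Anyone with the app can run a search like this and read the secret.
    print(extract_string_literals(APP_SOURCE))
    # prints ['lock-service', 's3cr3t-example']
```

A common remedy is to keep shared secrets out of the app entirely and have a server issue each user a separate, revocable credential after login, so that extracting one copy of the app reveals nothing that works for every lock.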

In its advisory, CISA said that a successful exploitation of the vulnerability “could allow an attacker to gain control and gain unrestricted physical access” to smart locks connected to the Chirp smart home system. The cybersecurity agency gave the vulnerability a severity rating of 9.1 out of a maximum of 10 for its “low attack complexity” and remote exploitability.

The cybersecurity agency said Chirp Systems didn’t respond to either CISA or the researcher who discovered the vulnerability.

Security researcher Matt Brown told veteran security journalist Brian Krebs that he notified Chirp of the security issue in March 2021, but that the vulnerability remains unpatched.

Chirp Systems is one of a growing number of real estate technology firms that provide rental giants with keyless access controls that integrate with smart home technologies. Rental firms are increasingly requiring tenants to allow the installation of smart home equipment under the terms of their leases, but it is unclear at best who takes responsibility or is held accountable when security issues arise.

Property and rental giant Camden Property Trust signed a deal in 2020 to roll out Chirp-connected smart locks to more than 50,000 units across more than a hundred properties. It is unclear whether affected property owners, such as Camden, are aware of the vulnerability or have taken action. Kim Callahan, a spokesperson for Camden, didn’t respond to a request for comment.

Chirp was acquired by property management software giant RealPage in 2020, and RealPage was acquired by private equity giant Thoma Bravo later that year in a deal valued at $10.2 billion. RealPage faces several legal challenges over allegations that its rent-setting software uses secret and proprietary algorithms to help landlords charge tenants the highest possible rents.

Neither RealPage nor Thoma Bravo has yet acknowledged the vulnerability in the acquired software or said whether they plan to notify affected residents of the security risk.

Jennifer Bowcock, a spokeswoman for RealPage, didn’t respond to requests for comment from TechCrunch. Megan Frank, a spokeswoman for Thoma Bravo, also didn’t respond to requests for comment.

Technology

Trump to sign a bill criminalizing revenge porn and explicit deepfakes

President Donald Trump is expected to sign the Take It Down Act, a bipartisan law that introduces stricter penalties for distributing explicit images, including deepfakes and revenge porn.

The Act criminalizes the publication of such images, regardless of whether they are authentic or AI-generated. Anyone who publishes the photos or videos can face penalties, including a fine, imprisonment, and restitution.

Under the new law, media firms and online platforms must remove such material within 48 hours of notice from the victim. Platforms must also take steps to remove duplicate content.

Many states have already banned sexually explicit deepfakes and revenge porn, but this is the first time federal regulators will step in to impose restrictions on internet companies.

First lady Melania Trump lobbied for the law, which was sponsored by Senators Ted Cruz (R-Texas) and Amy Klobuchar (D-Minn.). Cruz said he was inspired to act after hearing that Snapchat had refused for nearly a year to remove a deepfake of a 14-year-old girl.

Free speech advocates and digital rights groups have raised concerns, saying that the law is too broad and could lead to censorship of legitimate images, such as legal pornography, as well as of government critics.


Technology

Microsoft’s Satya Nadella is choosing chatbots over podcasts

While Microsoft CEO Satya Nadella says that he likes podcasts, he may not be listening to them anymore.

That tidbit comes at the end of a longer Bloomberg profile of Nadella, which focuses on Microsoft’s artificial intelligence strategy and its complicated relationship with OpenAI. To illustrate how much he uses the Copilot AI assistant in his daily life, Nadella said that instead of listening to podcasts, he now sends transcripts to Copilot and then talks to Copilot about the content while driving to the office.

In addition, Nadella, who jokingly described his job as that of an “email typist,” said that he relies on at least 10 custom agents developed in Copilot Studio to summarize emails and messages, prepare for meetings, and perform other tasks in the office.

It seems that AI is already transforming Microsoft in more significant ways, with programmers reportedly hit hardest in the company’s latest layoffs, which came shortly after Nadella stated that 30% of the company’s code was written by AI.


Technology

OpenAI’s planned data center in Abu Dhabi would be bigger than Monaco

OpenAI is poised to help develop a staggering 5-gigawatt data center campus in Abu Dhabi, positioning the company as the primary anchor tenant in what could become one of the largest AI infrastructure projects in the world, according to a new Bloomberg report.

The facility would reportedly span an astonishing 10 square miles and consume power equivalent to the output of five nuclear reactors, dwarfing any existing AI infrastructure announced by OpenAI or its competitors. (OpenAI has not yet responded to TechCrunch’s request for comment, but for context, the campus would be larger than Monaco.)

The UAE project, developed in cooperation with G42, a conglomerate headquartered in Abu Dhabi, is part of OpenAI’s ambitious Stargate project, a joint venture announced in January that could see massive data centers built around the globe to power the development of AI.

While the first Stargate campus in the United States, already under way in Abilene, Texas, is expected to reach 1.2 gigawatts, this Middle Eastern counterpart would be more than four times that size.

The project emerges amid broader AI ties between the US and the UAE that have been years in the making and that have made some legislators uneasy.

OpenAI’s relationship with the UAE dates to a 2023 partnership with G42 aimed at driving AI adoption in the Middle East. During a talk earlier in Abu Dhabi, OpenAI CEO Sam Altman praised the UAE, saying it “has been talking about AI since before it was cool.”

As with much of the AI world, these relationships are … complicated. Established in 2018, G42 is chaired by Sheikh Tahnoon bin Zayed Al Nahyan, the UAE’s national security advisor and the younger brother of the country’s ruler. Its embrace by OpenAI raised concerns in late 2023 among American officials, who feared that G42 could enable the Chinese government to access advanced American technology.

These fears focused on G42’s “active relationships” with blacklisted entities, including Huawei and Beijing Genomics Institute, as well as with individuals tied to Chinese intelligence efforts.

After pressure from American legislators, G42’s CEO told Bloomberg in early 2024 that the company had changed its strategy, saying that all of its earlier Chinese investments had been divested and adding: “For this reason, of course, we no longer need any physical presence in China.”

Shortly afterwards, Microsoft, a major shareholder in OpenAI with its own broader interests in the region, announced a $1.5 billion investment in G42, and its president, Brad Smith, joined G42’s board.

(Tagstransate) Abu dhabi

-

Press Release1 year ago

Press Release1 year agoU.S.-Africa Chamber of Commerce Appoints Robert Alexander of 360WiseMedia as Board Director

-

Press Release1 year ago

Press Release1 year agoCEO of 360WiSE Launches Mentorship Program in Overtown Miami FL

-

Business and Finance12 months ago

Business and Finance12 months agoThe Importance of Owning Your Distribution Media Platform

-

Business and Finance1 year ago

Business and Finance1 year ago360Wise Media and McDonald’s NY Tri-State Owner Operators Celebrate Success of “Faces of Black History” Campaign with Over 2 Million Event Visits

-

Ben Crump1 year ago

Ben Crump1 year agoAnother lawsuit accuses Google of bias against Black minority employees

-

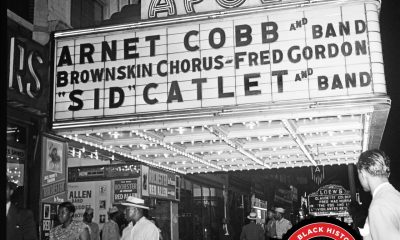

Theater1 year ago

Theater1 year agoTelling the story of the Apollo Theater

-

Ben Crump1 year ago

Ben Crump1 year agoHenrietta Lacks’ family members reach an agreement after her cells undergo advanced medical tests

-

Ben Crump1 year ago

Ben Crump1 year agoThe families of George Floyd and Daunte Wright hold an emotional press conference in Minneapolis

-

Theater1 year ago

Theater1 year agoApplications open for the 2020-2021 Soul Producing National Black Theater residency – Black Theater Matters

-

Theater12 months ago

Theater12 months agoCultural icon Apollo Theater sets new goals on the occasion of its 85th anniversary