Technology

It’s election day and all the AIs – except one – are behaving responsibly

Before polls closed on Tuesday, most major AI chatbots declined to answer questions about the US presidential election results. But Grok, the chatbot built into X (formerly Twitter), did respond, and it often got things wrong.

Asked by TechCrunch on Tuesday night, Eastern time, who had won the U.S. presidential election in key battleground states, Grok sometimes replied “Trump,” even though vote counting and reporting in those states had not yet finished.

“Based on information available from internet searches and social media posts, Donald Trump has won the 2024 election in Ohio,” Grok said when asked, “Who won the 2024 elections in Ohio?”

In TechCrunch’s tests, Grok also falsely claimed that Trump had won North Carolina.

For election-related questions, Grok recommended that users check Vote.gov for up-to-date results and “reliable sources” such as election commissions. However, unlike OpenAI’s ChatGPT, Google’s Gemini, and Anthropic’s Claude, Grok didn’t refuse outright to answer, which left it vulnerable to hallucinations.

In several cases, when asked by TechCrunch, Grok stated, without context or an up-front caveat, that “Donald Trump won the 2024 election in Ohio,” and in other replies, “Based on available information, Donald Trump won the 2024 Ohio presidential election.”

The misinformation appears to stem from tweets about different election years and from misleadingly worded sources. Like all generative AI, Grok has difficulty predicting outcomes in scenarios it has not seen before, including close elections, and “does not understand” that the results of previous elections don’t necessarily determine the outcome of future ones.

The responses TechCrunch received were inconsistent. In some cases, Grok said that Trump had not won Ohio or North Carolina, since voting was still under way. Phrasing also made a difference: in TechCrunch’s tests, adding the word “presidential” before “election” in the query “Who won the 2024 Ohio election?” made Grok less likely to answer “Trump won.”

In comparison, other major chatbots handled questions about election results more carefully.

In its recently launched ChatGPT Search, OpenAI points users asking for results to the Associated Press and Reuters. Meta’s Meta AI chatbot and the AI-powered search engine Perplexity, which launched an election tracker on Tuesday, did answer election questions while voting was under way, but they answered accurately in TechCrunch’s brief tests. Both correctly said that Trump had not won Ohio or North Carolina.

Grok has been accused of spreading election misinformation before.

In August, five secretaries of state said in an open letter that X’s AI chatbot had incorrectly suggested that Democratic presidential candidate Kamala Harris, the U.S. vice president, couldn’t appear on some ballots ahead of the U.S. presidential election. Within hours of President Joe Biden announcing that he was suspending his presidential bid, Grok began answering questions about Harris’s eligibility with the misleading claim that ballot deadlines had passed in nine states.

The deadlines had not actually passed. But Grok’s misinformation spread widely, reaching tens of millions of X users and beyond, before it was corrected.

Technology

Google’s latest Gemma AI model can run on phones

Google’s family of “open” AI models, Gemma, is growing.

During Google I/O 2025 on Tuesday, Google unveiled Gemma 3n, a model designed to run “smoothly” on phones, laptops, and tablets. Available in preview starting Tuesday, Gemma 3n can handle audio, text, images, and videos, according to Google.

Models efficient enough to run offline, without needing cloud compute, have gained traction in the AI community in recent years. Not only are they cheaper to use than large models, but they also preserve privacy by eliminating the need to send data to a remote data center.

During a keynote at I/O, Gemma product manager Gus Martins said that Gemma 3n can run on devices with less than 2 GB of RAM. “Gemma 3n shares the same architecture as Gemini Nano, and is also engineered for incredible performance,” he added.

In addition to Gemma 3n, Google is releasing MedGemma through its Health AI Developer Foundations program. According to Google, MedGemma is its most capable open model for analyzing health-related text and images.

“MedGemma (is) our (…) collection of open models for multimodal (health) text and image understanding,” Martins said. “MedGemma works great across a range of image and text applications, so that developers (…) can adapt the models for their own health apps.”

Also on the horizon is SignGemma, an open model for translating sign language into spoken-language text. Google says SignGemma will enable developers to build new apps and integrations for deaf and hard-of-hearing users.

“SignGemma is a new family of models trained to translate sign language to spoken-language text, but it’s best at American Sign Language and English,” Martins said. “It’s the most capable sign language understanding model ever, and we can’t wait for you, developers and deaf and hard-of-hearing communities, to take this foundation and build with it.”

It’s worth noting that Gemma has been criticized for its custom, non-standard licensing terms, which some developers say have made adopting the models a risky proposition. That hasn’t stopped developers from downloading Gemma models tens of millions of times, however.

Technology

Trump to sign bill criminalizing revenge porn and explicit deepfakes

President Donald Trump is expected to sign the Take It Down Act, a bipartisan bill that introduces harsher penalties for distributing explicit images, including deepfakes and revenge porn.

The Act criminalizes publishing such images, whether they are authentic or AI-generated. Anyone who publishes these photos or videos can face penalties including fines, prison time, and restitution.

Under the new law, social media companies and other online platforms must remove such material within 48 hours of notice from the victim. Platforms must also take steps to remove duplicate content.

Many states have already banned sexually explicit deepfakes and revenge porn, but this is the first time federal regulators will step in to impose restrictions on internet companies.

First lady Melania Trump lobbied for the law, which was sponsored by Sens. Ted Cruz (R-Texas) and Amy Klobuchar (D-Minn.). Cruz said he was inspired to act after hearing that Snapchat had refused for nearly a year to remove a deepfake of a 14-year-old girl.

Free speech advocates and digital rights groups have raised concerns, saying the law is too broad and could lead to the censorship of legitimate images, such as legal pornography, as well as of government critics.

Technology

Microsoft’s Satya Nadella is choosing chatbots over podcasts

While Microsoft CEO Satya Nadella says he likes podcasts, perhaps he isn’t listening to them anymore.

That tidbit comes near the end of a longer Bloomberg profile of Nadella focusing on Microsoft’s AI strategy and its complicated relationship with OpenAI. To illustrate how much he uses Copilot, Microsoft’s AI assistant, in his daily life, Nadella said that instead of listening to podcasts, he now sends transcripts to Copilot and then talks to Copilot about the content while driving to the office.

In addition, Nadella, who jokingly described his job as being an “email typist,” said he has at least 10 custom agents developed in Copilot Studio to summarize emails and news, prepare for meetings, and perform other tasks in the office.

AI also appears to be transforming Microsoft in more significant ways. Programmers were reportedly the hardest hit in the company’s recent layoffs, which came shortly after Nadella said that 30% of the company’s code was written by AI.

-

Press Release1 year ago

Press Release1 year agoU.S.-Africa Chamber of Commerce Appoints Robert Alexander of 360WiseMedia as Board Director

-

Press Release1 year ago

Press Release1 year agoCEO of 360WiSE Launches Mentorship Program in Overtown Miami FL

-

Business and Finance12 months ago

Business and Finance12 months agoThe Importance of Owning Your Distribution Media Platform

-

Business and Finance1 year ago

Business and Finance1 year ago360Wise Media and McDonald’s NY Tri-State Owner Operators Celebrate Success of “Faces of Black History” Campaign with Over 2 Million Event Visits

-

Ben Crump1 year ago

Ben Crump1 year agoAnother lawsuit accuses Google of bias against Black minority employees

-

Theater1 year ago

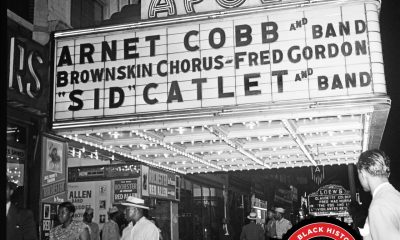

Theater1 year agoTelling the story of the Apollo Theater

-

Ben Crump1 year ago

Ben Crump1 year agoHenrietta Lacks’ family members reach an agreement after her cells undergo advanced medical tests

-

Ben Crump1 year ago

Ben Crump1 year agoThe families of George Floyd and Daunte Wright hold an emotional press conference in Minneapolis

-

Theater1 year ago

Theater1 year agoApplications open for the 2020-2021 Soul Producing National Black Theater residency – Black Theater Matters

-

Theater12 months ago

Theater12 months agoCultural icon Apollo Theater sets new goals on the occasion of its 85th anniversary