Technology

CTGT aims to make AI models safer

Growing up as an immigrant, Cyril Gorlla taught himself how to code and practiced as if he were possessed.

“At age 11, I successfully completed a coding course at my mother’s college, amid periodic household utility disconnections,” he told TechCrunch.

In high school, Gorlla learned about artificial intelligence and became so obsessed with the idea of training his own AI models that he took his laptop apart to improve its internal cooling. This tinkering led Gorlla to an internship at Intel during his sophomore year of college, where he researched the optimization and interpretability of AI models.

Gorlla’s college years coincided with the AI boom, during which companies like OpenAI raised billions of dollars for their AI technology. Gorlla believed that AI had the potential to transform entire industries. But he also felt that safety work was taking a backseat to shiny new products.

“I felt there needed to be a fundamental change in the way we understand and train artificial intelligence,” he said. “Lack of certainty and trust in model outputs poses a significant barrier to adoption in industries such as healthcare and finance, where AI can make the most difference.”

So, together with Trevor Tuttle, whom he met during his undergraduate studies, Gorlla dropped out of his graduate program to found CTGT, a company that helps organizations deploy AI more thoughtfully. CTGT presented today at TechCrunch Disrupt 2024 as part of the Startup Battlefield competition.

“My parents think I go to school,” he said. “It might be a shock for them to read this.”

CTGT works with firms to discover biased results and model hallucinations and tries to address their root cause.

It is not possible to completely eliminate errors from a model. However, Gorlla says CTGT’s audit approach may help companies mitigate them.

“We reveal the model’s internal understanding of concepts,” he explained. “While a model that tells the user to add glue to a recipe may seem funny, a response that recommends a competitor when a customer asks for a product comparison is not so trivial. Giving a patient outdated information from a clinical trial, or making a credit decision on the basis of hallucinations, is unacceptable.”

A recent poll from Cnvrg found that reliability is a top concern for enterprises deploying AI applications. In a separate study from risk management software provider Riskonnect, more than half of executives said they were concerned that employees would make decisions based on inaccurate information from AI tools.

The idea of a dedicated platform for assessing an AI model’s decision-making process is not new. TruEra and Patronus AI are among the startups developing tools for interpreting model behavior, as are Google and Microsoft.

Gorlla, however, argues that CTGT’s techniques are more efficient, partly because they don’t depend on training “evaluative” AI to monitor models in production.

“Our mathematically guaranteed interpretability is different from current state-of-the-art methods, which are inefficient and require training hundreds of other models to gain model insight,” he said. “As companies become increasingly aware of computational costs and enterprise AI moves from demos to delivering real value, our value proposition is important, as we provide companies with the ability to rigorously test the safety of advanced AI without having to train additional models or use other models as evaluators.”

To address potential customers’ concerns about data breaches, CTGT offers an on-premises option in addition to its managed plan. It charges the same annual fee for both.

“We do not have access to customer data, which gives them full control over how and where it is used,” Gorlla said.

CTGT, a graduate of the Character Labs accelerator, has the backing of former GV partners Jake Knapp and John Zeratsky (co-founders of Character VC), Mark Cuban and Zapier co-founder Mike Knoop.

“Artificial intelligence that cannot explain its reasoning is not intelligent enough in many areas where complex rules and requirements apply,” Cuban said in a press release. “I invested in CTGT because it solves this problem. More importantly, we are seeing results in our own use of AI.”

And although CTGT is in its early stages, it has several clients, including three unnamed Fortune 10 brands. Gorlla says CTGT worked with one of these companies to minimize bias in its facial recognition algorithm.

“We identified a flaw in the model that was focusing too much on hair and clothing to make predictions,” he said. “Our platform provided practitioners with instant knowledge without the guesswork and time waste associated with traditional interpretation methods.”

In the coming months, CTGT will focus on building out its engineering team (currently only Gorlla and Tuttle) and improving the platform.

If CTGT manages to gain a foothold in the burgeoning market for AI interpretability, it could be lucrative indeed. Analytics firm Markets and Markets projects that “explainable AI” as a sector could be worth $16.2 billion by 2028.

“Model size is far outpacing Moore’s Law and advances in AI training chips,” Gorlla said. “This means we need to focus on a fundamental understanding of AI to deal with both the inefficiencies and the increasingly complex nature of model decisions.”

Technology

OpenAI’s next big bet will not be a wearable: Report

OpenAI pushed generative artificial intelligence into the public consciousness. Now it may develop a very different kind of AI device.

According to a WSJ report, OpenAI CEO Sam Altman told employees on Wednesday that the company’s next big product would not be a wearable. Instead, it will be a compact, screenless device that is fully aware of its user’s surroundings. Small enough to sit on a desk or fit in a pocket, the device was described by Altman both as a “third device” alongside a MacBook Pro and an iPhone, and as an “AI companion” integrated into everyday life.

The preview came after OpenAI announced that it was acquiring io, a startup founded last year by former Apple designer Jony Ive, in an equity deal valued at $6.5 billion. Ive will take on a key creative and design role at OpenAI.

Altman reportedly told employees that the acquisition could ultimately add $1 trillion to the company’s value as it builds devices unlike the wearables or glasses that other outfits have released.

Altman also reportedly stressed to staff that secrecy would be crucial to prevent competitors from copying the product before launch. As it turns out, a recording of his remarks leaked to the Journal, raising questions about how much he can trust his team and how much more he will be willing to reveal.

Technology

Google’s latest Gemma AI model can run on phones

Google’s family of “open” AI models, Gemma, is growing.

During Google I/O 2025 on Tuesday, Google unveiled Gemma 3n, a model designed to run “smoothly” on phones, laptops and tablets. Available in preview starting Tuesday, Gemma 3n can handle audio, text, images and video, according to Google.

Models efficient enough to run offline, without the need for cloud computing, have gained popularity in the AI community in recent years. They are not only cheaper to use than large models, but they also preserve privacy by eliminating the need to send data to a remote data center.

During the keynote at I/O, Gemma product manager Gus Martins said that Gemma 3n can run on devices with less than 2GB of RAM. “Gemma 3n shares the same architecture as Gemini Nano, and is also designed for incredible performance,” he added.

In addition to Gemma 3n, Google is releasing MedGemma through its Health AI Developer Foundations program. According to the company, MedGemma is its most capable open model for analyzing health-related text and images.

“MedGemma (is) our (…) collection of open models for multimodal (health) text and image understanding,” said Martins. “MedGemma works great across a range of imaging and text applications, so that developers (…) can adapt the models to their own health applications.”

Also on the horizon is SignGemma, an open model for translating sign language into spoken-language text. Google says SignGemma will allow developers to create new applications and integrations for deaf and hard-of-hearing users.

“SignGemma is a new family of models trained to translate sign language into spoken-language text, but it is best at American Sign Language and English,” said Martins. “This is the most capable sign language understanding model ever, and we are looking forward to you, developers and the deaf and hard-of-hearing communities, taking this foundation and building with it.”

It is worth noting that Gemma has been criticized for its custom, non-standard licensing terms, which some developers say make adopting the models a risky proposition. However, this has not discouraged developers from downloading Gemma models tens of millions of times.


Technology

Trump to sign a bill criminalizing revenge porn and explicit deepfakes

President Donald Trump is expected to sign the Take It Down Act, a bipartisan law that introduces stricter penalties for distributing explicit images, including deepfakes and revenge porn.

The act criminalizes the publication of such images, regardless of whether they are authentic or AI-generated. Anyone who publishes such photos or videos can face penalties, including a fine, imprisonment and restitution.

Under the new law, media companies and online platforms must remove such material within 48 hours of notice from the victim. Platforms must also take steps to remove duplicate content.

Many states have already banned sexually explicit deepfakes and revenge porn, but this is the first time federal regulators will step in to impose restrictions on internet companies.

First lady Melania Trump lobbied for the law, which was sponsored by Sens. Ted Cruz (R-Texas) and Amy Klobuchar (D-Minn.). Cruz said he was inspired to act after hearing that Snapchat had refused for nearly a year to remove a deepfake of a 14-year-old girl.

Free speech advocates and digital rights groups have raised concerns, saying that the law is too broad and could lead to the censorship of legitimate images, such as legal pornography, as well as of government critics.

(Tagstransate) AI

-

Press Release1 year ago

Press Release1 year agoU.S.-Africa Chamber of Commerce Appoints Robert Alexander of 360WiseMedia as Board Director

-

Press Release1 year ago

Press Release1 year agoCEO of 360WiSE Launches Mentorship Program in Overtown Miami FL

-

Business and Finance12 months ago

Business and Finance12 months agoThe Importance of Owning Your Distribution Media Platform

-

Business and Finance1 year ago

Business and Finance1 year ago360Wise Media and McDonald’s NY Tri-State Owner Operators Celebrate Success of “Faces of Black History” Campaign with Over 2 Million Event Visits

-

Ben Crump1 year ago

Ben Crump1 year agoAnother lawsuit accuses Google of bias against Black minority employees

-

Theater1 year ago

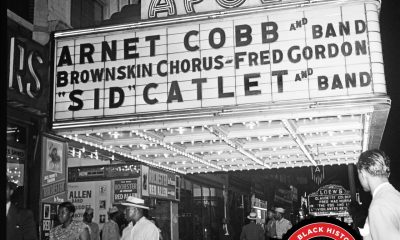

Theater1 year agoTelling the story of the Apollo Theater

-

Ben Crump1 year ago

Ben Crump1 year agoHenrietta Lacks’ family members reach an agreement after her cells undergo advanced medical tests

-

Ben Crump1 year ago

Ben Crump1 year agoThe families of George Floyd and Daunte Wright hold an emotional press conference in Minneapolis

-

Theater1 year ago

Theater1 year agoApplications open for the 2020-2021 Soul Producing National Black Theater residency – Black Theater Matters

-

Theater12 months ago

Theater12 months agoCultural icon Apollo Theater sets new goals on the occasion of its 85th anniversary