Technology

This week in AI: Generative AI spams academic journals

Hi, folks, welcome to TechCrunch’s regular AI newsletter.

This week in AI, generative AI is beginning to spam academic publishing, a discouraging new development on the disinformation front.

In a post on Retraction Watch, a blog that tracks recent retractions of academic studies, assistant professors of philosophy Tomasz Żuradzki and Leszek Wroński wrote about three journals published by Addleton Academic Publishers that appear to consist entirely of AI-generated articles.

The journals contain papers that follow the same template, overstuffed with buzzwords like “blockchain,” “metaverse,” “internet of things,” and “deep learning.” They list the same editorial board – 10 members of which are deceased – and a nondescript address in Queens, New York, that appears to be a house.

So what’s the problem, you might ask. Isn’t wading through AI-generated spam simply a cost of doing business online these days?

Well, yes. But the fake journals show how easily the systems used to evaluate researchers for promotions and tenure can be gamed – and this could be a bellwether for knowledge workers in other industries.

On at least one widely used evaluation system, CiteScore, the journals rank in the top 10 for philosophy research. How is this possible? They cite one another extensively. (CiteScore factors citations into its calculations.) Żuradzki and Wroński report that, of the 541 citations in one of Addleton’s journals, 208 come from the publisher’s other fake publications.
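For a sense of scale, the two figures reported above can be turned into a share with a quick back-of-the-envelope calculation. The variable names are illustrative and do not come from the Retraction Watch post; only the two counts are from the article.

```python
# Figures as reported for one Addleton journal (from the article above).
total_citations = 541
citations_from_sibling_journals = 208  # from the publisher's other fake titles

# Share of the journal's citations that originate inside the publisher's own network.
in_network_share = citations_from_sibling_journals / total_citations

print(f"{in_network_share:.1%} of citations come from the publisher's other journals")
```

In other words, well over a third of the citations counted toward the journal's ranking came from its own publisher's network.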

“(These rankings) often serve as indicators of research quality for universities and funding institutions,” Żuradzki and Wroński wrote. “They play a key role in decisions about academic rewards, hiring and promotion, and thus can influence researchers’ publication strategies.”

You could argue that CiteScore is the problem – it’s clearly a flawed metric. And that’s not a wrong argument to make. But it’s also not wrong to say that generative AI and its abuse are disrupting systems on which people’s livelihoods depend in unexpected – and potentially quite harmful – ways.

There is a future in which generative AI forces us to rethink and redesign systems like CiteScore to be more equitable, holistic and inclusive. The bleaker alternative – and the one playing out today – is a future in which generative AI continues to run amok, wreaking havoc and ruining professional lives.

I hope we’ll correct course soon.

News

DeepMind soundtrack generator: DeepMind, Google’s AI research lab, says it’s developing AI technology to generate soundtracks for videos. DeepMind’s AI takes a description of the soundtrack (e.g. “jellyfish pulsating underwater, sea life, ocean”) together with the video to create music, sound effects, and even dialogue that match the characters and tone of the video.

Robot chauffeur: Researchers at the University of Tokyo have developed and trained a “musculoskeletal humanoid” named Musashi to drive a small electric car around a test track. Equipped with two cameras that stand in for human eyes, Musashi can “see” the road ahead as well as the views reflected in the car’s side mirrors.

New AI search engine: Genspark, a new AI-powered search platform, uses generative AI to write custom summaries in response to search queries. It has raised $60 million so far from investors including Lanchi Ventures; its latest funding round valued the company at $260 million post-money, a respectable figure given that Genspark is going up against rivals like Perplexity.

How much does ChatGPT cost?: How much does ChatGPT, OpenAI’s ever-expanding AI-powered chatbot platform, cost? Answering that question is tougher than you might think. To keep track of the various ChatGPT subscription options available, we have put together an updated ChatGPT pricing guide.

Science article of the week

Autonomous vehicles face an endless variety of edge cases, depending on location and situation. If you’re on a two-lane road and someone puts on their left turn signal, does that mean they’re going to change lanes? Or that you should pass them? The answer may depend on whether you’re driving on I-5 or the Autobahn.

A group of researchers from Nvidia, USC, UW and Stanford show in a paper just published at CVPR that many ambiguous or unusual circumstances can be resolved by, if you can believe it, having an AI read the local drivers’ handbook.

Their Large Language Driving Assistant, or LLaDa, gives an LLM access to the driving manual for a state, country or region, without even fine-tuning on it. Local rules, customs or signage are found in that text, and when an unexpected circumstance occurs, such as a honk, high beams or a herd of sheep, an appropriate action is generated (pull over, stop, honk back).
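To make the idea concrete, here is a minimal sketch of the "look it up in the handbook, then ask the model" pattern. It is not the researchers' implementation: the handbook excerpts are invented for illustration, the keyword matching is a deliberately crude stand-in for whatever retrieval the paper uses, and the function names (`retrieve_rules`, `build_prompt`) are hypothetical. The final call to an LLM is left out.

```python
# Hypothetical handbook excerpts, invented for illustration only.
HANDBOOK = {
    "region_a": [
        "If a vehicle behind you flashes its high beams, move to the right lane when safe.",
        "Yield to livestock on the road and stop until the animals have passed.",
    ],
    "region_b": [
        "A short honk from behind at a green light means you should proceed.",
    ],
}


def _words(text):
    """Lowercase words with trailing punctuation stripped."""
    return {w.strip(".,").lower() for w in text.split()}


def retrieve_rules(region, event, handbook=HANDBOOK):
    """Return handbook passages that share a keyword (4+ letters) with the event."""
    keywords = {w for w in _words(event) if len(w) > 3}
    return [p for p in handbook.get(region, []) if keywords & _words(p)]


def build_prompt(region, event):
    """Assemble the text that would be sent to an LLM (the call itself is omitted)."""
    rules = retrieve_rules(region, event)
    rules_text = "\n".join(f"- {r}" for r in rules) or "- (no matching passage found)"
    return (
        f"Local driving rules for {region}:\n{rules_text}\n\n"
        f"Unexpected event: {event}\n"
        "Suggest a safe action that follows the rules above."
    )


prompt = build_prompt("region_a", "A herd of livestock is blocking the road")
print(prompt)
```

The point of the design is that the model is not retrained per region; only the text placed in front of it changes.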

It’s by no means a complete end-to-end driving system, but it shows an alternative path to a “universal” driving system that still encounters surprises. Or perhaps it’s a way for the rest of us to find out why we’re being honked at when we visit unfamiliar places.

Model of the week

On Monday, Runway, a company that builds generative AI tools aimed at film and image content creators, unveiled Gen-3 Alpha. Trained on a vast number of images and videos from both public and in-house sources, Gen-3 can generate video clips from text descriptions and still images.

Runway claims Gen-3 Alpha delivers “significant” improvements in generation speed and fidelity over Runway’s previous flagship video model, Gen-2, as well as fine-grained control over the structure, style and motion of the videos it creates. Runway says Gen-3 can also be customized to offer more “stylistically controlled” and consistent characters, guided by “specific artistic and narrative requirements.”

Gen-3 Alpha has its limitations – including the fact that its footage lasts a maximum of 10 seconds. But Runway co-founder Anastasis Germanidis promises that it is just the first of several video-generating models to come in a family of next-generation models trained on Runway’s upgraded infrastructure.

Gen-3 Alpha is the latest of several generative video systems to hit the scene in recent months. Others include OpenAI’s Sora, Luma’s Dream Machine, and Google’s Veo. Together, they threaten to upend the film and TV industry as we know it – assuming they can overcome copyright challenges.

Grab bag

AI won’t take your next McDonald’s order.

McDonald’s this week announced that it will remove automated order-taking technology, which the fast-food chain has been testing for the better part of three years, from more than 100 of its restaurants. The technology, developed with IBM and installed at drive-thrus, went viral last year for its tendency to misunderstand customers and make mistakes.

A recent piece in The Takeout suggests that AI is losing its grip on fast-food operators, who not long ago expressed enthusiasm for the technology and its potential to increase efficiency (and reduce labor costs). Presto, a major player in the AI-powered drive-thru market, recently lost a major customer, Del Taco, and is facing mounting losses.

The problem is inaccuracy.

McDonald’s CEO Chris Kempczinski told CNBC in June 2021 that its voice-recognition technology was accurate about 85% of the time, but that human staff had to assist with about one in five orders. Meanwhile, according to The Takeout, the best version of Presto’s system completes only about 30% of orders without human assistance.
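Putting the two reported figures on the same footing helps show the gap. The sketch below is only arithmetic on the numbers quoted above, and it assumes that "needing human assistance" was measured in a comparable way for both systems, which the reporting does not confirm. Variable names are illustrative.

```python
# Figures as reported in the article.
mcdonalds_assisted = 1 / 5   # about one in five orders needed staff help
presto_unassisted = 0.30     # about 30% of orders completed without help

# Convert McDonald's figure to the same "unassisted" basis as Presto's.
mcdonalds_unassisted = 1 - mcdonalds_assisted
gap = mcdonalds_unassisted - presto_unassisted

print(f"McDonald's unassisted: {mcdonalds_unassisted:.0%}")
print(f"Presto unassisted: {presto_unassisted:.0%}")
print(f"Gap: {gap * 100:.0f} percentage points")
```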

So while AI is decimating some segments of the gig economy, it seems that certain jobs – especially those that require understanding a wide range of accents and dialects – can’t be automated. At least not yet.

Technology

Google’s latest Gemma AI model can run on phones

Google’s family of “open” AI models, Gemma, is growing.

During Google I/O 2025 on Tuesday, Google took the wraps off Gemma 3n, a model designed to run “smoothly” on phones, laptops and tablets. Available in preview starting Tuesday, Gemma 3n can handle audio, text, images and videos, according to Google.

Models efficient enough to run offline, without the need for computing in the cloud, have gained popularity in the AI community in recent years. Not only are they cheaper to use than large models, but they also preserve privacy by eliminating the need to send data to a remote data center.

During the keynote at I/O, Gemma product manager Gus Martins said that Gemma 3n can run on devices with less than 2GB of RAM. “Gemma 3n shares the same architecture as Gemini Nano, and is also designed for incredible performance,” he added.

In addition to Gemma 3n, Google is releasing MedGemma through its Health AI Developer Foundations program. According to Google, MedGemma is its most capable open model for analyzing health-related text and images.

“MedGemma (is) our (…) collection of open models for multimodal (health) text and image understanding,” Martins said. “MedGemma works great across a range of image and text applications, so that developers (…) could adapt the models for their own health apps.”

Also on the horizon is SignGemma, an open model for translating sign language into spoken-language text. Google says that SignGemma will enable developers to create new apps and integrations for deaf and hard-of-hearing users.

“SignGemma is a new family of models trained to translate sign language into spoken-language text, but it’s best at American Sign Language and English,” Martins said. “It’s the most capable sign language understanding model ever, and we can’t wait for you – developers and deaf and hard-of-hearing communities – to take this foundation and build with it.”

It’s worth noting that Gemma has been criticized for its custom, non-standard licensing terms, which some developers say have made adopting the models a risky proposition. That hasn’t discouraged developers from downloading Gemma models tens of millions of times, however.


Technology

Trump to sign bill criminalizing revenge porn and explicit deepfakes

President Donald Trump is expected to sign the Take It Down Act, a bipartisan law that introduces stricter penalties for distributing explicit images, including deepfakes and revenge porn.

The Act criminalizes the publication of such images, whether they are authentic or AI-generated. Anyone who publishes the photos or videos can face penalties including a fine, imprisonment and restitution.

Under the new law, media companies and online platforms must remove such material within 48 hours of notice from the victim. Platforms must also take steps to remove duplicate content.

Many states have already banned sexually explicit deepfakes and revenge porn, but this is the first time federal regulators will step in to impose restrictions on internet companies.

First lady Melania Trump lobbied for the law, which was sponsored by Sens. Ted Cruz (R-Texas) and Amy Klobuchar (D-Minn.). Cruz said he was inspired to act after hearing that Snapchat refused for nearly a year to remove a deepfake of a 14-year-old girl.

Free speech advocates and digital rights groups have raised concerns, saying that the law is too broad and could lead to the censorship of legitimate images, such as legal pornography, as well as government critics.


Technology

Microsoft’s Satya Nadella is choosing chatbots over podcasts

While Microsoft CEO Satya Nadella says he likes podcasts, he may not actually be listening to them anymore.

That tidbit comes at the end of a longer Bloomberg profile of Nadella, which focuses on Microsoft’s AI strategy and its complicated relationship with OpenAI. To illustrate how much he uses the Copilot AI assistant in his daily life, Nadella said that instead of listening to podcasts, he now uploads transcripts to Copilot and then talks with Copilot about the content while driving to the office.

In addition, Nadella – who jokingly described his job as an “email typist” – said that he relies on at least 10 custom agents developed in Copilot Studio to summarize emails and messages, prepare for meetings and perform other tasks in the office.

It seems that AI is already transforming Microsoft in more substantial ways, with programmers reportedly hit hardest in the company’s latest layoffs, which came shortly after Nadella said that 30% of the company’s code was written by AI.

(Tagstotransate) microsoft

-

Press Release1 year ago

Press Release1 year agoU.S.-Africa Chamber of Commerce Appoints Robert Alexander of 360WiseMedia as Board Director

-

Press Release1 year ago

Press Release1 year agoCEO of 360WiSE Launches Mentorship Program in Overtown Miami FL

-

Business and Finance12 months ago

Business and Finance12 months agoThe Importance of Owning Your Distribution Media Platform

-

Business and Finance1 year ago

Business and Finance1 year ago360Wise Media and McDonald’s NY Tri-State Owner Operators Celebrate Success of “Faces of Black History” Campaign with Over 2 Million Event Visits

-

Ben Crump1 year ago

Ben Crump1 year agoAnother lawsuit accuses Google of bias against Black minority employees

-

Theater1 year ago

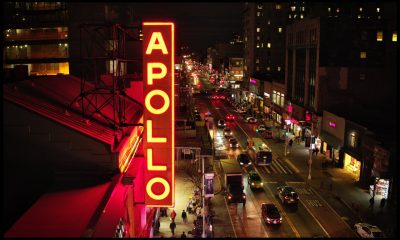

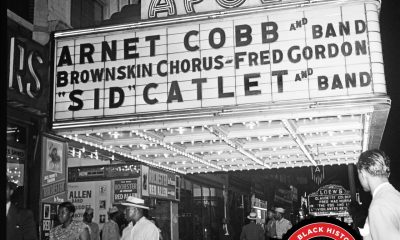

Theater1 year agoTelling the story of the Apollo Theater

-

Ben Crump1 year ago

Ben Crump1 year agoHenrietta Lacks’ family members reach an agreement after her cells undergo advanced medical tests

-

Ben Crump1 year ago

Ben Crump1 year agoThe families of George Floyd and Daunte Wright hold an emotional press conference in Minneapolis

-

Theater1 year ago

Theater1 year agoApplications open for the 2020-2021 Soul Producing National Black Theater residency – Black Theater Matters

-

Theater12 months ago

Theater12 months agoCultural icon Apollo Theater sets new goals on the occasion of its 85th anniversary