Technology

Women in AI: UC Berkeley’s Brandie Nonnecke says investors should push for responsible AI practices

To give AI-focused women academics and others their well-deserved (and overdue) time in the spotlight, TechCrunch is launching a series of interviews focusing on remarkable women who have contributed to the AI revolution. As the AI boom continues, we will publish several pieces throughout the year, highlighting key work that often goes unrecognized. Read more profiles here.

Brandie Nonnecke is the founding director of the CITRIS Policy Lab at the University of California, Berkeley, which supports interdisciplinary research to address questions about the role of regulation in promoting innovation. Nonnecke is also co-director of the Berkeley Center for Law and Technology, where she leads projects on artificial intelligence, platforms and society, and of the UC Berkeley AI Policy Hub, an initiative to train researchers to develop effective AI governance and policy frameworks.

In her spare time, Nonnecke hosts the TecHype video and podcast series, which examines emerging technology policies, regulations and laws, provides insight into the benefits and risks, and identifies strategies for putting technology to good use.

Questions & Answers

Briefly, how did you get your start in AI? What attracted you to the field?

I have been working on responsible AI governance for almost a decade. My background in technology, public policy and their intersection with social impact drew me to this field. Artificial intelligence is already ubiquitous and has a huge effect on our lives, for better and for worse. I’m committed to contributing meaningfully to society’s ability to use this technology for good, rather than standing on the sidelines.

What work are you most proud of (in the AI field)?

I’m really proud of two things we have achieved. First, the University of California was the first university to establish a responsible AI policy and governance structure to better ensure responsible procurement and use of AI. We take seriously our commitment to serve the public in a responsible manner. I had the honor of co-chairing the University of California’s Presidential Working Group on Artificial Intelligence and its subsequent permanent Council on Artificial Intelligence. In these roles, I was able to gain first-hand experience thinking through how best to implement our responsible AI principles to protect our faculty, staff, students and the broader communities we serve. Second, I believe it is critically important that the public understands emerging technologies and the real benefits and risks associated with them. We launched TecHype, a video and podcast series that explains emerging technologies and provides guidance on effective technical and policy interventions.

How do you navigate the challenges of the male-dominated tech industry and, by extension, the male-dominated AI industry?

Be curious, persistent and undeterred by imposter syndrome. I believe it is critically important to seek out mentors who support diversity and inclusion, and to offer the same support to others entering the field. Building inclusive communities in the tech industry is a powerful way to share experiences, advice and encouragement.

What advice would you give to women seeking to enter the AI field?

For women entering the field of artificial intelligence, my advice is threefold. Continuously seek knowledge, because artificial intelligence is a rapidly evolving field. Embrace networking, because contacts will open doors to opportunities and offer invaluable support. And advocate for yourself and others, because your voice is essential in shaping an inclusive, equitable future for AI. Remember that your unique perspectives and experiences enrich the field and drive innovation.

What are some of the most pressing issues facing AI as it evolves?

I believe that one of the most pressing issues facing AI as it evolves is avoiding being misled by the latest hype cycles. We’re seeing this now with generative AI. Sure, generative AI is a significant advance and will have a huge impact, good and bad. However, other forms of machine learning in use today are quietly making decisions that directly affect everyone’s ability to exercise their rights. Rather than focusing on the latest marvels of machine learning, it’s more important that we focus on how and where machine learning is being applied, regardless of its technological prowess.

What issues should AI users pay attention to?

Users of AI should pay attention to issues related to data privacy and security, the potential for bias in AI decision-making, and the importance of transparency in the operation of AI systems and decision-making. Understanding these issues can enable users to demand more accountable and fair AI systems.

What is the best way to build AI responsibly?

Building artificial intelligence responsibly requires taking ethical issues into consideration at every stage of development and deployment. This includes diverse stakeholder engagement, transparent methodologies, bias management strategies and ongoing impact assessments. Prioritizing the public good and ensuring that AI technologies are developed with human rights, equity and inclusion at their core are essential.

How can investors better promote responsible AI?

This is such an important question! For a long time, we never explicitly discussed the role of investors. I cannot express enough how influential investors are! I believe that the claim that “regulation stifles innovation” is overused and often untrue. Instead, I firmly believe that smaller firms can gain a late-mover advantage and learn from the larger AI companies that have been developing responsible AI practices, and from the guidance emerging from academia, civil society and government. Investors have the power to shape the direction of the industry by making responsible AI practices a critical factor in their investment decisions. This includes supporting initiatives focused on addressing societal challenges through AI, promoting diversity and inclusion in the AI workforce, and advocating for strong governance and technical strategies that help ensure AI technologies benefit society as a whole.

Why a new anti-revenge porn law has free speech experts alarmed

Privacy and digital rights advocates are raising alarms over a law that many would expect them to support: a federal crackdown on revenge porn and AI-generated deepfakes.

The newly signed Take It Down Act makes it illegal to publish nonconsensual explicit images, whether real or AI-generated, and gives platforms just 48 hours to comply with a victim’s takedown request or face liability. Although the law has been widely praised as a long-overdue win for victims, experts have also warned that its vague language, lax standards for verifying claims, and tight compliance window could pave the way for overreach, censorship of legitimate content, and even surveillance.

“Content moderation at scale is widely problematic and always ends up with important and necessary speech being censored,” said India McKinney, director of federal affairs at the Electronic Frontier Foundation, a digital rights organization.

Online platforms have one year to establish a process for removing nonconsensual intimate imagery (NCII). Although the law requires that takedown requests come from victims or their representatives, it asks only for a physical or electronic signature; no photo ID or other form of verification is required. This is likely aimed at reducing barriers for victims, but it could also create an opportunity for abuse.

“I really want to be wrong about this, but I think there will be more requests to take down images depicting queer and trans people in relationships, and even more than that, I think it will be consensual porn,” said McKinney.

Senator Marsha Blackburn (R-TN), a co-sponsor of the Take It Down Act, also sponsored the Kids Online Safety Act, which puts the onus on platforms to protect children from harmful online content. Blackburn has said she believes content related to transgender people is harmful to children. Similarly, the Heritage Foundation, the conservative think tank behind Project 2025, has also said that “keeping the content away from children protects children.”

Because of the liability that platforms face if they don’t take down an image within 48 hours of receiving a request, “the default is going to be that they just take it down without doing any investigation to see if this actually is NCII or if it’s another type of protected speech, or if it’s even relevant to the person who’s making the request,” said McKinney.

Snapchat and Meta have both said they support the law, but neither responded to TechCrunch’s requests for more information about how they will verify whether the person requesting a takedown is a victim.

Mastodon, a decentralized platform that hosts its own flagship server that others can join, told TechCrunch it would lean toward removal if it was too difficult to verify the victim.

Mastodon and other decentralized platforms, such as Bluesky or Pixelfed, may be especially vulnerable to the chilling effect of the 48-hour takedown rule. These networks rely on independently operated servers, often run by nonprofits or individuals. Under the law, the FTC can treat any platform that doesn’t “reasonably comply” with takedown demands as committing an “unfair or deceptive act or practice,” even if the host isn’t a commercial entity.

“This is troubling on its face, but it is particularly so at a moment when the chair of the FTC has taken unprecedented steps to politicize the agency and has explicitly promised to use the power of the agency to punish platforms and services on an ideological, as opposed to principled, basis,” the Cyber Civil Rights Initiative, a nonprofit dedicated to ending revenge porn, said in a statement.

Proactive monitoring

McKinney predicts that platforms will start moderating content before it is disseminated, so that they have fewer problematic posts to take down in the future.

Platforms are already using AI to monitor for harmful content.

Kevin Guo, CEO and co-founder of content detection startup Hive, said his company works with online platforms to detect deepfakes and child sexual abuse material (CSAM). Hive’s customers include Reddit, Giphy, Vevo, Bluesky and BeReal.

“In fact, we were one of the tech companies that endorsed this bill,” Guo told TechCrunch. “It will help solve some pretty important problems and compel these platforms to adopt solutions more proactively.”

Hive’s model is software-as-a-service, so the startup doesn’t control how platforms use its product to flag or remove content. But Guo said that many clients insert Hive’s API at the point of upload, to monitor content before anything is sent out to the community.

A Reddit spokesperson told TechCrunch that the platform uses “sophisticated internal tools, processes and teams to address and remove” NCII. Reddit also partners with the nonprofit SWGfL to deploy its StopNCII tool, which scans live traffic for matches against a database of known NCII and removes accurate matches. The company did not share how it would ensure that the person requesting a takedown is the victim.

McKinney warns that this kind of monitoring could extend to encrypted messages in the future. Although the law focuses on public or semi-public dissemination, it also requires platforms to “remove and make reasonable efforts to prevent” the re-upload of nonconsensual intimate images. She argues that this could incentivize proactive scanning of all content, even in encrypted spaces. The law doesn’t include any carve-outs for end-to-end encrypted messaging services such as WhatsApp, Signal or iMessage.

Meta, Signal and Apple did not respond to TechCrunch’s request for more information about their plans for encrypted messaging.

Broader free speech implications

On March 4, Trump delivered a joint address to Congress in which he praised the Take It Down Act and said he looked forward to signing it into law.

“And I’m going to use that bill for myself, too, if you don’t mind,” he added. “There’s nobody who gets treated worse than I do online.”

While the audience laughed at the comment, not everyone took it as a joke. Trump hasn’t been shy about suppressing or retaliating against unfavorable speech, whether that means labeling mainstream media outlets “enemies of the people,” barring the Associated Press from the Oval Office despite a court order, or pulling funding from NPR and PBS.

On Thursday, the Trump administration barred Harvard University from accepting foreign students, escalating a conflict that began after Harvard refused to follow Trump’s demands that it make changes to its curriculum and eliminate DEI-related content, among other things. In retaliation, Trump has frozen federal funding to Harvard and threatened to revoke the university’s tax-exempt status.

“At a time when we’re already seeing school boards try to ban books, and we’re seeing certain politicians be very explicit about the types of content they don’t want people to ever see, whether it’s critical race theory or abortion information or information about climate change …” McKinney said.

After Klarna, Zoom’s CEO also uses an AI avatar on quarterly call

CEOs are now so immersed in AI that they are sending their avatars to handle quarterly earnings calls in their place, at least in part.

After the AI avatar of Klarna’s CEO appeared on an investor call earlier this week, Zoom CEO Eric Yuan followed suit, also using his avatar for initial comments. Yuan deployed his custom avatar via Zoom Clips, the company’s asynchronous video tool.

“I am proud to be among the first CEOs to use an avatar in an earnings call,” he said, or rather, his avatar said. “It is just one example of how Zoom is pushing the boundaries of communication and collaboration. At the same time, we know trust and security are essential. We take AI-generated content seriously and have built in strong safeguards to prevent misuse, protect user identity and ensure avatars are used responsibly.”

Yuan has long been in favor of using avatars in meetings and has previously said that the company aims to create digital twins of users. He isn’t alone in this vision; the CEO of an AI-powered transcription service is reportedly training his own avatar to share the load.

Meanwhile, Zoom said it is making the custom avatar feature available to all users this week.

OpenAI’s next big bet won’t be a wearable: Report

OpenAI pushed generative AI into the public consciousness. Now it may be developing a very different kind of AI device.

According to a WSJ report, OpenAI CEO Sam Altman told employees on Wednesday that the company’s next major product won’t be a wearable. Instead, it will be a compact, screenless device that is fully aware of its user’s surroundings. Small enough to sit on a desk or fit in a pocket, the device was described by Altman both as a “third device” alongside a MacBook Pro and an iPhone, and as an “AI companion” integrated into everyday life.

The preview came after OpenAI announced that it was acquiring io, a startup founded last year by former Apple designer Jony Ive, in an equity deal valued at $6.5 billion. Ive will take on a key creative and design role at OpenAI.

Altman reportedly told employees that the acquisition could eventually add $1 trillion to the company’s value with devices unlike the wearables or glasses that other outfits have released.

Altman reportedly also emphasized to staff that secrecy would be critical to prevent competitors from copying the product before launch. As it turns out, a recording of his remarks leaked to the Journal, raising questions about how much he can trust his team and how much more he will be willing to disclose.

(Tagstotransate) devices

-

Press Release1 year ago

Press Release1 year agoU.S.-Africa Chamber of Commerce Appoints Robert Alexander of 360WiseMedia as Board Director

-

Press Release1 year ago

Press Release1 year agoCEO of 360WiSE Launches Mentorship Program in Overtown Miami FL

-

Business and Finance12 months ago

Business and Finance12 months agoThe Importance of Owning Your Distribution Media Platform

-

Business and Finance1 year ago

Business and Finance1 year ago360Wise Media and McDonald’s NY Tri-State Owner Operators Celebrate Success of “Faces of Black History” Campaign with Over 2 Million Event Visits

-

Ben Crump1 year ago

Ben Crump1 year agoAnother lawsuit accuses Google of bias against Black minority employees

-

Theater1 year ago

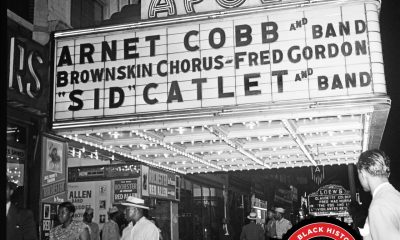

Theater1 year agoTelling the story of the Apollo Theater

-

Ben Crump1 year ago

Ben Crump1 year agoHenrietta Lacks’ family members reach an agreement after her cells undergo advanced medical tests

-

Ben Crump1 year ago

Ben Crump1 year agoThe families of George Floyd and Daunte Wright hold an emotional press conference in Minneapolis

-

Theater1 year ago

Theater1 year agoApplications open for the 2020-2021 Soul Producing National Black Theater residency – Black Theater Matters

-

Theater12 months ago

Theater12 months agoCultural icon Apollo Theater sets new goals on the occasion of its 85th anniversary